Model interpretation and explanation

Colorizing black boxes

September 1, 2016 — December 22, 2023

The meeting point of differential privacy, accountability, interpretability, the tank detection story, and clever horses in machine learning.

Closely related: am I explaining the model so I can see if it is fair?

There is much work; I understand little of it at the moment, but I keep needing to refer to papers, so this notebook exists.

1 Impossibility results

- Cassie Kozyrkov, Explainable AI won’t deliver. Here’s why.

- Wolters Kluwer, Peeking into the black box: a design perspective on comprehensible AI, part 1

- Rudin (2019) argues the opposite.

2 Integrated gradients

See Ancona et al. (2017) and Sundararajan, Taly, and Yan (2017). The Captum implementation seems neat.
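A minimal NumPy sketch of integrated gradients, assuming a toy differentiable model whose gradient is known in closed form (a logistic model with made-up weights `w`). It approximates the path integral from the baseline to the input with a midpoint Riemann sum, then checks the completeness property: the attributions should sum to f(x) - f(baseline). For a real PyTorch model you would reach for Captum's `IntegratedGradients` rather than this.

```python
import numpy as np

def integrated_gradients(grad_f, x, baseline, steps=200):
    """Integrated gradients along the straight path from baseline to x.

    attribution_i = (x_i - baseline_i) * integral_0^1 dF/dx_i(baseline + a (x - baseline)) da,
    approximated here with a midpoint Riemann sum over `steps` points.
    """
    x = np.asarray(x, dtype=float)
    baseline = np.asarray(baseline, dtype=float)
    alphas = (np.arange(steps) + 0.5) / steps            # midpoints of [0, 1]
    path = baseline + alphas[:, None] * (x - baseline)   # shape (steps, d)
    grads = np.array([grad_f(p) for p in path])          # gradient at each path point
    return (x - baseline) * grads.mean(axis=0)

# Hypothetical toy "model": logistic regression with fixed weights.
w = np.array([1.5, -2.0, 0.5])

def f(x):
    return 1.0 / (1.0 + np.exp(-w @ x))

def grad_f(x):
    s = f(x)
    return s * (1.0 - s) * w

x = np.array([1.0, -0.5, 1.0])
baseline = np.zeros(3)
attr = integrated_gradients(grad_f, x, baseline)
# Completeness: attr.sum() should be close to f(x) - f(baseline).
print(attr, attr.sum(), f(x) - f(baseline))
```

The all-zeros baseline is itself a modelling choice; a different reference input gives different attributions.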

3 When do neurons mean something?


It would be very convenient if the individual neurons of artificial neural networks corresponded to cleanly interpretable features of the input. For example, in an “ideal” ImageNet classifier, each neuron would fire only in the presence of a specific visual feature, such as the color red, a left-facing curve, or a dog snout. Empirically, in models we have studied, some of the neurons do cleanly map to features. But it isn’t always the case that features correspond so cleanly to neurons, especially in large language models where it actually seems rare for neurons to correspond to clean features. This brings up many questions. Why is it that neurons sometimes align with features and sometimes don’t? Why do some models and tasks have many of these clean neurons, while they’re vanishingly rare in others?

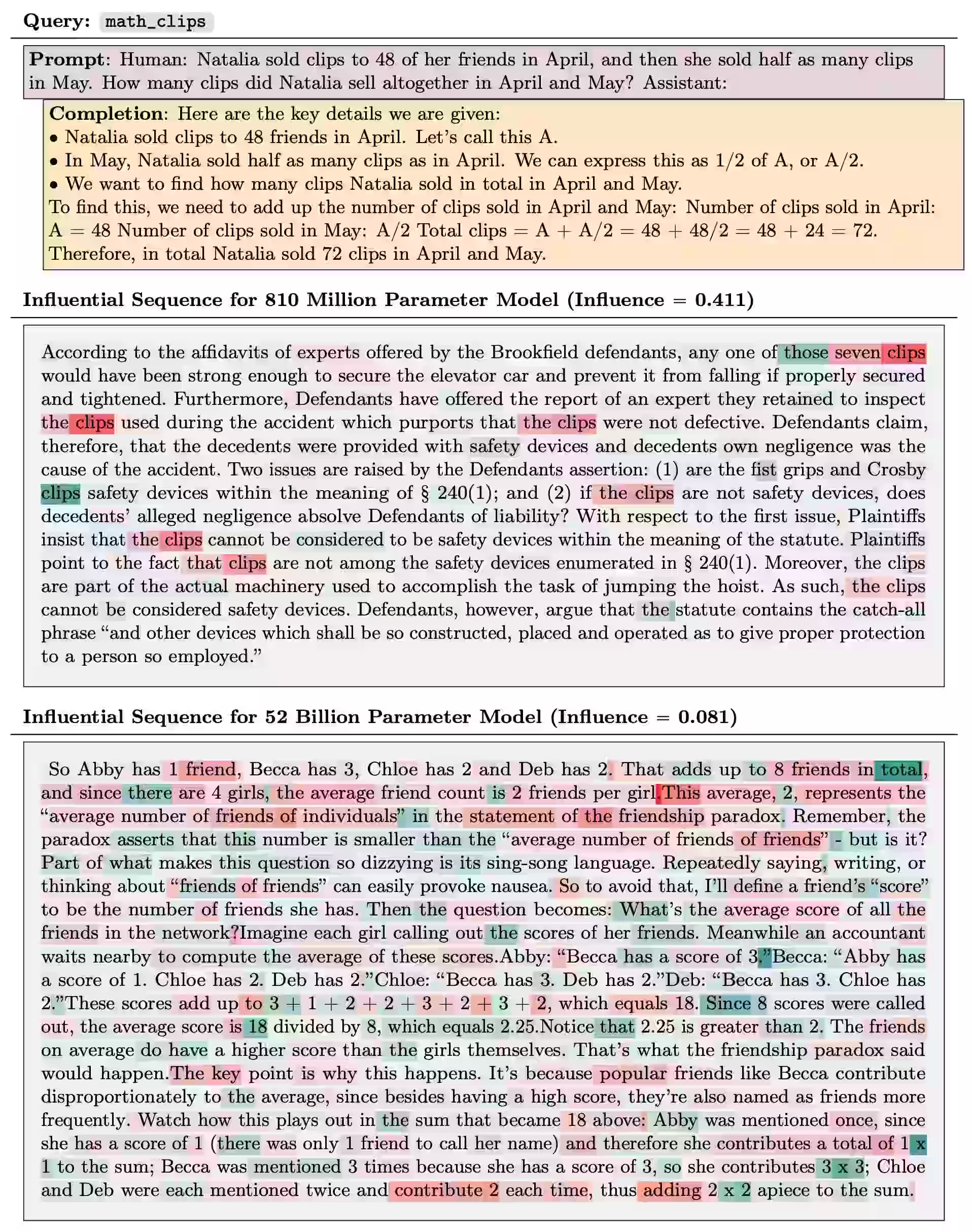

4 Influence functions

If we think of models as interpolators of memorised training data, then using influence functions to trace a prediction back to individual training points becomes a powerful idea.

5 Shapley values

Shapley values come from cooperative game theory, where they divide a jointly produced payoff fairly among the players; it turns out they are also applicable to explanation.

Not sure what else happens here, but see (Ghorbani and Zou 2019; Hama, Mase, and Owen 2022; Scott M. Lundberg et al. 2020; Scott M. Lundberg and Lee 2017) for applications to explaining both data and features.
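A brute-force sketch of Shapley-value feature attribution, assuming that a feature "absent" from a coalition is replaced by a baseline value (one of several value functions in use). It enumerates every coalition, so it only works for a handful of features; scalable approaches rely on sampling or on model structure instead. The toy model is hypothetical.

```python
import itertools
import math
import numpy as np

def shapley_values(f, x, baseline):
    """Exact Shapley values of f at x by enumerating every coalition of features.

    Assumption: a feature absent from a coalition takes its baseline value.
    Each feature needs 2^d model evaluations, so this is for small d only.
    """
    x = np.asarray(x, dtype=float)
    baseline = np.asarray(baseline, dtype=float)
    d = x.size

    def value(coalition):
        z = baseline.copy()
        for j in coalition:
            z[j] = x[j]          # features in the coalition take their observed values
        return f(z)

    phi = np.zeros(d)
    for i in range(d):
        others = [j for j in range(d) if j != i]
        for size in range(d):
            # Shapley weight |S|! (d - |S| - 1)! / d!
            weight = math.factorial(size) * math.factorial(d - size - 1) / math.factorial(d)
            for S in itertools.combinations(others, size):
                phi[i] += weight * (value(S + (i,)) - value(S))
    return phi

# Hypothetical toy model with an interaction between features 0 and 2.
def model(z):
    return 2 * z[0] - z[1] + z[0] * z[2]

phi = shapley_values(model, [1.0, 2.0, 3.0], [0.0, 0.0, 0.0])
# phi is [3.5, -2.0, 1.5]: the interaction term's 3 is split equally between
# features 0 and 2, and the attributions sum to model(x) - model(baseline) = 3.
```

Using training points as the players and model performance as the value gives the data-valuation flavour of the same formula.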

6 By ablation

Not a fan. But see ablation studies.

7 Incoming

Saphra, Interpretability Creationism

[…] Stochastic Gradient Descent is not literally biological evolution, but post-hoc analysis in machine learning has a lot in common with scientific approaches in biology, and likewise often requires an understanding of the origin of model behavior. Therefore, the following holds whether looking at parasitic brooding behavior or at the inner representations of a neural network: if we do not consider how a system develops, it is difficult to distinguish a pleasing story from a useful analysis. In this piece, I will discuss the tendency towards “interpretability creationism” – interpretability methods that only look at the final state of the model and ignore its evolution over the course of training—and propose a focus on the training process to supplement interpretability research.


Interpretable features tend to arise (at a given level of abstraction) if and only if the training distribution is diverse enough (at that level of abstraction).

George Hosu, A Parable Of Explainability

Connection to Gödel: Mathematical paradoxes demonstrate the limits of AI (Colbrook, Antun, and Hansen 2022; Heaven 2019)

I frequently need the link to LIME (Ribeiro, Singh, and Guestrin 2016), a neat method that explains individual predictions by fitting a penalised regression model locally. See their blog post.
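A stripped-down sketch of the LIME mechanism for tabular inputs, under my own assumptions: Gaussian perturbations around the point, an exponential proximity kernel, and a ridge fit with an unpenalised intercept. The `lime` package itself makes different choices (e.g. discretising features and selecting a sparse subset of them), so treat `lime_tabular` as a hypothetical illustration of the idea, not that package's API.

```python
import numpy as np

def lime_tabular(f, x, n_samples=2000, scale=1.0, kernel_width=0.75, alpha=1e-3, seed=0):
    """Fit a locally weighted linear surrogate to black box f around x.

    Returns (intercept, slopes): the surrogate's prediction at x and its local
    feature effects.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    d = x.size
    Z = x + scale * rng.normal(size=(n_samples, d))     # perturbed neighbours of x
    y = np.array([f(z) for z in Z])                      # black-box predictions
    dist = np.linalg.norm(Z - x, axis=1)
    w = np.exp(-dist**2 / kernel_width**2)               # proximity weights
    A = np.hstack([np.ones((n_samples, 1)), Z - x])      # intercept + centred features
    P = alpha * np.eye(d + 1)
    P[0, 0] = 0.0                                        # do not penalise the intercept
    AtW = A.T * w                                        # weight each sample's column
    coef = np.linalg.solve(AtW @ A + P, AtW @ y)         # weighted ridge regression
    return coef[0], coef[1:]

# Sanity check: a linear black box should be recovered almost exactly.
intercept, slopes = lime_tabular(lambda z: 2 * z[0] - z[1] + 0.5, [1.0, 1.0])

# A nonlinear black box explained in a small neighbourhood: the slopes should
# come out close to the true gradient (1, 2) at this point.
_, local_slopes = lime_tabular(lambda z: np.sin(z[0]) + z[1] ** 2, [0.0, 1.0], scale=0.1)
```

The `scale` and `kernel_width` parameters decide how "local" the explanation is; widening them trades faithfulness at the point for a smoother summary.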

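A cousin of LIME is SHAP (Scott M. Lundberg and Lee 2017), which grounds local explanations in Shapley values and has a particularly fast variant for tree ensembles.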

The deep dream “activation maximisation” images arguably count as a type of model explanation, e.g. multifaceted neuron visualisation (Nguyen, Yosinski, and Clune 2016).
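A toy sketch of activation maximisation, assuming a tiny random two-layer network as a stand-in for a real model: gradient ascent on the input to increase one unit's output, with an L2 penalty to keep the input bounded. Real feature visualisations on image models use much stronger image-specific regularisers and priors than this single penalty; the network and all names here are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.normal(size=(8, 4))   # hypothetical first layer: 4 inputs -> 8 hidden units
b = rng.normal(size=8)
v = rng.normal(size=8)        # readout defining the "neuron" we want to visualise

def activation(x):
    return v @ np.tanh(W @ x + b)

def activation_grad(x):
    h = np.tanh(W @ x + b)
    return W.T @ (v * (1.0 - h**2))      # chain rule through tanh

def objective(x, lam=0.1):
    return activation(x) - 0.5 * lam * (x @ x)

def maximise_activation(x0, lam=0.1, lr=0.02, steps=2000):
    """Gradient ascent on activation(x) - (lam / 2) ||x||^2, starting from x0."""
    x = x0.copy()
    for _ in range(steps):
        x += lr * (activation_grad(x) - lam * x)
    return x

x0 = 0.01 * rng.normal(size=4)           # near-blank starting "image"
x_star = maximise_activation(x0)
print(activation(x0), activation(x_star))
```

Starting the ascent from different initial points can land on different local optima, which is roughly the intuition behind visualising several "facets" of one neuron.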

Belatedly, I notice that the Data Skeptic podcast did a whole season on interpretability.

How explainable artificial intelligence can help humans innovate

Are Model Explanations Useful in Practice? Rethinking How to Support Human-ML Interactions.

Existing XAI methods are not useful for decision-making. Presenting humans with popular, general-purpose XAI methods does not improve their performance on real-world use cases that motivated the development of these methods. Our negative findings align with those of contemporaneous works.